2022 was truly a breakout year for generative artificial intelligence (AI), which can produce fluent textual responses to questions, draft stories, and generate images on demand.

But generative AI really came to the fore for investors after Microsoft (MSFT) decided in January of this year to invest $10 billion in OpenAI, the creator of the chatbot sensation ChatGPT.

Microsoft stock itself jumped more than 12% shortly after the deal announcement, adding nearly $250 billion to the company’s market cap. The rise was based on hopes that the underlying technology will live up to the prediction by Satya Nadella, the company’s CEO, that it would “reshape pretty much every software category that we know.”

But the true winner of the AI wars won’t be Microsoft. Here’s who you should be looking at instead…

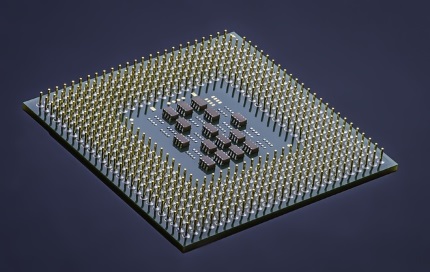

The AI Wars are About Chips

It’s little wonder that an all-out struggle seems to have broken out to see what company will dominate this brave new world of AI. The players include Microsoft, Google, and a host of others.

However, Wall Street thinks it already knows who the ultimate winner will be…the ‘picks and shovels’ companies that manufacture all the “weaponry” the combatants will be using.

And what are these weapons? They are the advanced chips needed for generative AI systems, such as the ChatGPT chatbot. According to Richard Waters of the Financial Times, “…investors are not betting on just any manufacturer” for the production of these chips. In a February 17 article, he pointed out that Nvidia Corp.’s (NVDA) graphics processing units (GPUs) dominate the market for training large AI models. The company’s shares have surged 45% already in 2023.

And as Waters pointed out, the stock has nearly doubled since its low in October. That was when Nvidia was in investors’ doghouse due to a combination of the crypto bust (crypto miners widely used Nvidia’s chips), a collapse in PC sales, and a bungled product transition in data center chips.

It does look like GPUs will be critical in this war to dominate in AI. Besides the job of training large AI models, GPUs are also likely to be more widely used in inferencing—the job of comparing real-world data against a trained model to provide a useful answer.

Waters quoted Karl Freund at Cambrian AI Research, who said that, until now, AI inferencing has been a healthy market for companies like Intel Corp. (INTC) that make CPUs (processors that can handle a wider range of tasks but are less efficient to run). However, the AI models used in generative systems are likely to be too large for CPUs, requiring more powerful GPUs to handle the task.

Just five years ago, some on Wall Street predicted that GPUs were yesterday’s news and would not be needed in AI as much as competing technology like ASICs (application-specific integrated circuits).

Yet here we are today, and Nvidia is sitting on top of the mountain. Much of that, as Waters explains, is thanks to the company’s CUDA software, which is used for running applications on Nvidia’s GPUs. Nvidia also has a product hitting the market at just the right time: its new H100 chip. It has been specifically designed to handle transformers, the AI technique behind recent big advances in language and vision models.

According to Nvidia, a transformer model is a neural network that learns context, and thus meaning, by tracking relationships in sequential data like the words in this sentence. Transformer models apply an evolving set of mathematical techniques, called attention or self-attention, to detect subtle ways even distant data elements in a series influence and depend on each other.
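For readers curious what "attention" looks like in practice, here is a minimal sketch in plain Python. It is an illustration only, not Nvidia's or OpenAI's code. It assumes a simplified form of scaled dot-product self-attention in which each word vector acts as its own query, key, and value (real transformers first apply learned projections), and the three toy "word" vectors are made up for the example.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Simplified scaled dot-product self-attention.

    Assumption: queries, keys, and values are the input vectors themselves.
    Production transformers use learned projection matrices instead.
    """
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Score how strongly this position relates to every position.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # Blend all value vectors according to those weights.
        mixed = [
            sum(w * value[i] for w, value in zip(weights, vectors))
            for i in range(dim)
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical toy vectors for a three-word sequence; words 0 and 2 are identical.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = self_attention(tokens)
```

In this toy run, the first word puts equal weight on itself and on the identical third word, and less on the unrelated second word, even though the third word is not adjacent to it. That is the "distant data elements influence each other" idea in miniature. Doing this for every pair of positions in long sequences is the kind of highly parallel arithmetic GPUs are built for.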

Why Buy Nvidia?

Of course, there are competitors encroaching on Nvidia’s space, including all the “Big Tech” companies. Waters pointed to how Google decided eight years ago to design its own chips, known as tensor processing units, or TPUs, to handle its most intensive AI work. Amazon and Meta have followed a similar path.

At Microsoft, its success in generative AI owes a lot to the specialized hardware—based on GPUs—it has built to run the OpenAI models. However, in the chip industry, rumors have been swirling lately that Microsoft is now designing its own AI accelerators.

Despite all of this, I suspect that five years from now, Nvidia will still be a major force in AI, building on its current first-mover advantage in chip solutions for AI.

Buy NVDA on any tech stock weakness, in the $180 to $220 range.